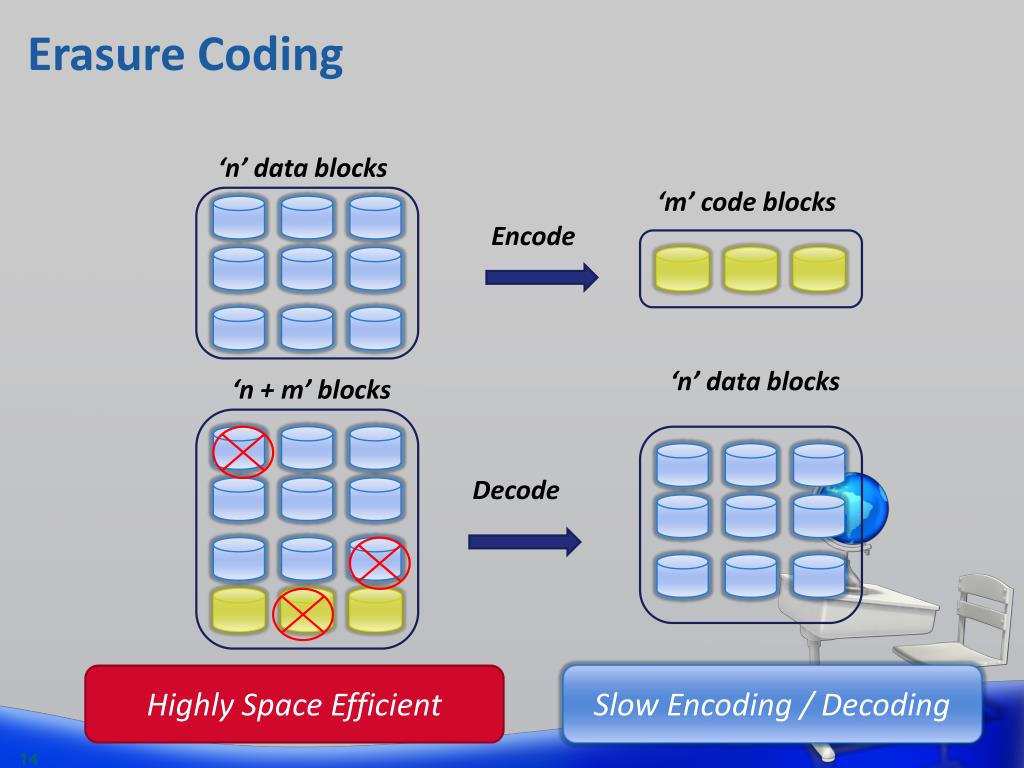

Someone please help me fully understand the implications of using EC

I’m currently in the process of doing a complete system backup of my Linux system to Backblaze B2. I’ve been out of the loop with Duplicacy for quite a while, so Erasure Coding was a new feature for me to get my head around upon returning to it. I have read the Backblaze Blog regarding EC, and watched some YouTube vids on it too, and I think I have wrapped my head around it now.

My question is, what are the implications of enabling it on Online Storage? What are the ideal situations in which you would enable it? For example, say you had a Local NAS, would the NAS be aware of Duplicacy’s EC setting? And would the Data:Parity ratio set on Duplicacy need to match the number of drives in your NAS?

Given that I wanted to get my large Linux system backup underway asap, I turned on EC upon storage creation in B2 before I fully understood what it was, as I gathered enough to understand it would be more difficult to add later. However, now I’m wondering if it was somewhat pointless to do on B2, given they use EC on their side already. I don’t understand what an EC setting of 5:2 in Duplicacy would mean when that data arrives in Backblaze. Am I using more online storage than I needed to, or less? Are they aware of that command and can split the data across 5 drives?

With EC enabled in Duplicacy some of the data will be written with redundancy. This increases the likelihood that the backup survives some specific types of media failure. The only use case for it is if you back up to a single local HDD.

In my opinion, this is a useless gimmick: storage shall guarantee data integrity, and it’s not the job of the application to verify that. A line has to be drawn somewhere: you have to trust filesystem, RAM, and CPU correctness, otherwise the application will become a full-fledged OS.

So, if the storage is unreliable, the solution is not to add crutches to make it slightly more reliable; instead, the solution is to switch to reliable storage that guarantees data integrity mathematically, not merely on a “best effort” basis. Pretty much every cloud storage provider, including B2, Storj, and many others, already provides such guarantees. Enabling additional overhead in Duplicacy just increases your spending on storage and provides no benefits.

For local backup to a NAS - use a ZFS or Btrfs filesystem that supports data checksumming and healing. If your NAS does not support this - replace the NAS.

So do you think it was pointless for Duplicacy to add this feature? Is it literally only useful for backing up to a single local drive?

Or perhaps a local conventional RAID array that cannot recover from bit rot, until one procures a proper appliance.

Also see this: Is erasure coding for Online storage providers recommended? - #2 by gchen

It’s one of those things that looks cool on paper and is fun to implement, but is useless and/or counterproductive in real life scenarios. But I’m just another user, so my opinion does not have to agree with any other, including the Duplicacy developers’.

I’m also of the opinion that fewer features are better than more (look at Kopia - this is what happens when developers are allowed to run unchecked), and that support for *drive storage endpoints (OneDrive, DropBox, Google Drive, etc) should be dropped by Duplicacy, and that hot storage is a bad (expensive) choice for backup, and that support for Archival storage (Amazon Glacier Deep Archive) is long overdue, etc.
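On the "more storage or less?" part of the 5:2 question, a rough way to see the cost is to read 5:2 as 5 data shards plus 2 parity shards per chunk, so roughly 7/5 of the original size ends up being stored. The Python sketch below only does that arithmetic. It assumes equal-size shards and ignores any per-chunk headers or checksums, and the function names are made up for illustration; they are not part of Duplicacy or B2.

```python
from fractions import Fraction


def ec_overhead(data_shards: int, parity_shards: int) -> Fraction:
    """Stored size divided by original size for a data:parity split."""
    if data_shards <= 0 or parity_shards < 0:
        raise ValueError("need data_shards > 0 and parity_shards >= 0")
    return Fraction(data_shards + parity_shards, data_shards)


def stored_size(original_bytes: int, data_shards: int, parity_shards: int) -> int:
    """Approximate bytes stored once parity shards are added.

    Assumption: the data is cut into equal-size shards (rounded up),
    and each parity shard is the same size as a data shard.
    """
    shard_size = -(-original_bytes // data_shards)  # ceiling division
    return shard_size * (data_shards + parity_shards)


if __name__ == "__main__":
    ratio = ec_overhead(5, 2)
    print(f"5:2 stores about {float(ratio):.1f}x the original size")
    print(f"1 TB of chunks becomes about {stored_size(10**12, 5, 2)} bytes")
```

Under these assumptions a 5:2 setting costs about 40% more stored data than the same backup without it, regardless of what redundancy the storage provider applies on its own side.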
